Quick Key Takeaways: Worth every minute. I own shares and will add on declines.

Incredible product road map:

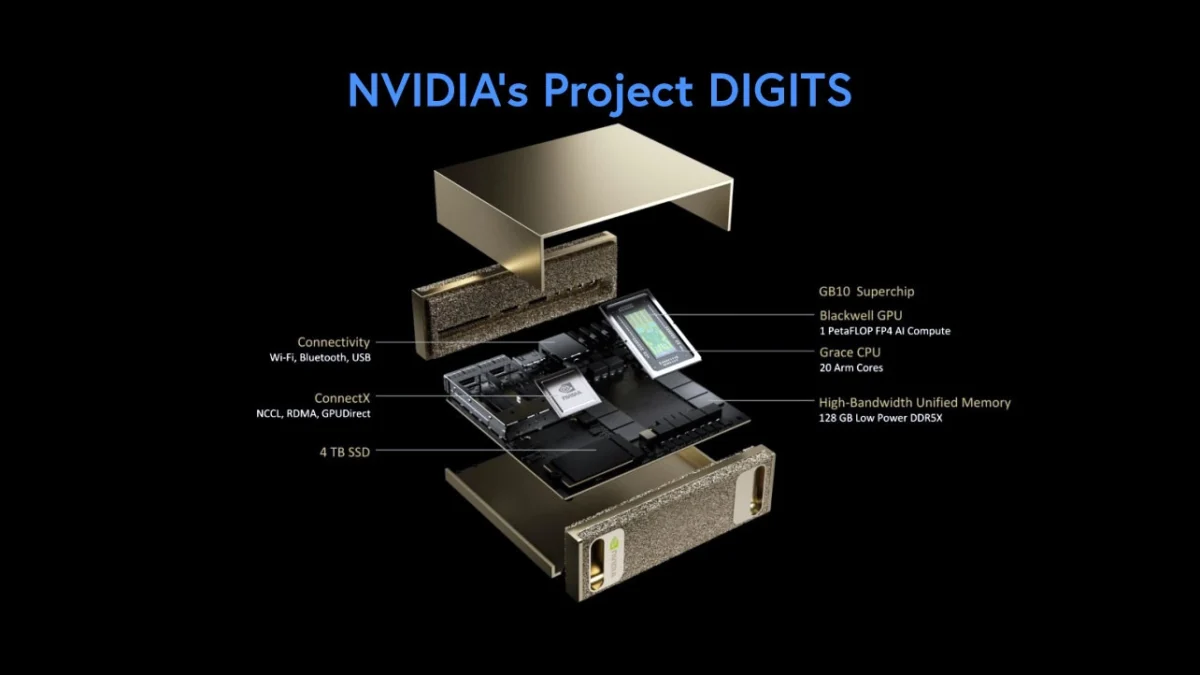

Blackwells are in full production and Blackwell NVL72 is expected in 2H2025. Some may quibble about a “delay,” since investors expected strong NVL36 and NVL72 sales as early as Q1 or Q2, but it hardly makes a difference in the long run. In the worst-case scenario, the stock could drop 10-15%, but that should attract buying unless other macroeconomic uncertainties cause a continuous slump in the market/economy. I would back up the truck at $100.

Rubin, which is the next series of GPU systems, will be available in the second half of 2026 – again a massive leap in performance.

Nvidia (NVDA) has a 1-year upgrade cadence:

a) Nobody else has that

b) It’s across the board: GPUs, racks, networking, storage, and packaging, and the whole ecosystem of partners.

Nvidia’s market leadership is going to last a while. That is my main investing thesis, and I can withstand the short-term bumps.

Cost Analysis: I’m glad Jensen spoke about this in more detail. What stood out for me was a clear-cut analysis of how variable costs fall as their GPU systems get smarter and more efficient, bringing total cost of ownership (TCO) down. Generating tokens to answer queries is horrendously expensive for Large Language Models like ChatGPT, and it has been a black hole.

I expect costs to come down significantly, making the business model viable. Customers are expected to pay up to $3Mn for the Blackwell NVL72, so it has to become profitable for them.

Omniverse, Cosmos, and Robotics are the other focus areas that go beyond data centers. To reduce its dependence on hyperscalers, Nvidia needs other target markets (industrials, factories, automakers, and oil and gas companies) to embrace AI and therefore use its GPUs, and Jensen spent a lot of time on them. He also emphasized enterprise software partnerships for AI, which would help Nvidia gain acceptance as a ubiquitous product and bring in extra revenue. For Nvidia’s vision of alternate intelligent computing, we have to see more Palantirs, ServiceNows, and AppLovins. In my opinion, Agentic AI will make serious inroads in 12-15 months.

I’ll add more in another note once I parse the transcript in detail.

Bottom line – this is going to be an incredible journey and we’re just at the beginning. Sure, it’s going to be a bumpy ride, and given the macro environment, it would be prudent to manage risks by waiting for the right entry and taking profits when the stock is overvalued or overbought, or, if you have the expertise, by using other hedging mechanisms. I’m sold on Jensen’s idea of a new paradigm of accelerated and intelligent computing based on GPUs and agents. Nvidia is best positioned to take full advantage of it.